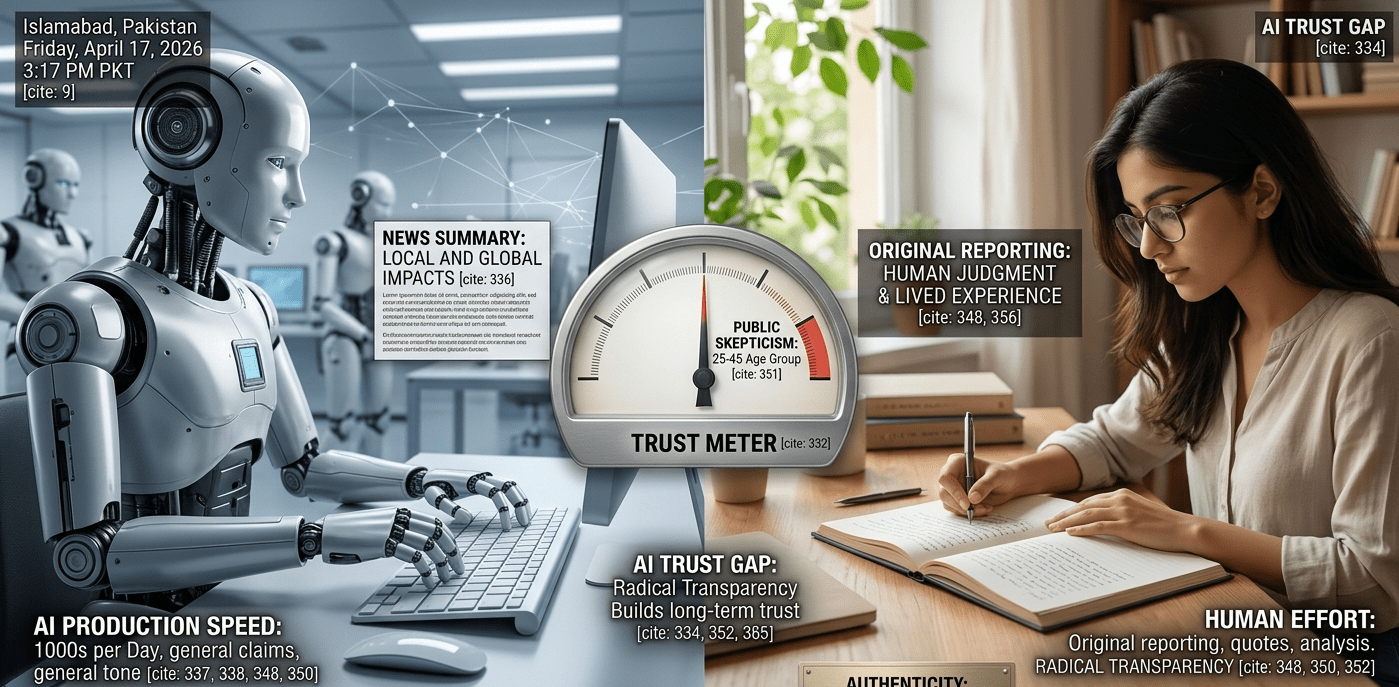

The internet in 2026 is drowning in content. What most readers haven’t fully registered is that a growing percentage of what they read — from news summaries to health articles — was written by an algorithm, not a person. This shift has created what researchers now call the ‘AI trust gap’: a widening disconnect between content volume and content credibility.

How Much Online Content Is AI-Generated in 2026?

A 2025 study by NewsGuard found that AI-generated content accounts for approximately 20% of all indexed web articles, with that share significantly higher in product reviews, local news aggregation, and financial summaries. The pace of production is the key variable: a human journalist produces 1–3 quality articles per day, while an AI content pipeline can produce thousands.

Can Readers Actually Tell the Difference Between AI and Human Writing?

Research from Stanford’s Human-Computer Interaction Group in 2024 found that readers correctly identified AI-generated text only 53% of the time — barely better than chance. More troublingly, when AI content was presented in a credible format with proper bylines and publication design, readers rated it as more trustworthy than equivalent human-written content. Readers are evaluating credibility by presentation quality, not source authenticity.
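
How far 53% really sits from the 50% coin-flip baseline depends on how many judgments were collected, a figure not given here. The following is a minimal Python sketch of that arithmetic, assuming a simple normal approximation. The function name and the sample sizes are hypothetical illustrations, not values from the study.

```python
import math


def z_score_vs_chance(accuracy: float, n_judgments: int, chance: float = 0.5) -> float:
    """Normal-approximation z-score of an observed accuracy against a chance rate."""
    # Standard error of a proportion under the chance hypothesis.
    standard_error = math.sqrt(chance * (1 - chance) / n_judgments)
    return (accuracy - chance) / standard_error


# Hypothetical sample sizes, NOT taken from the study cited above.
for n in (100, 1_000, 10_000):
    z = z_score_vs_chance(0.53, n)
    print(f"n={n:>6}: z = {z:.2f}")
```

Under these assumed sample sizes, the same 53% goes from well within noise (z of about 0.6 at 100 judgments) to statistically distinct from guessing (z of about 6 at 10,000). Either way, readers remain only three points above a coin flip in practical terms.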

What Is the AI Trust Gap and Why Does It Matter?

The AI trust gap describes the mismatch between the credibility readers assign to content and the actual reliability of that content’s sourcing and reasoning. It matters because errors produced at scale become embedded in the web itself. When AI-generated health content recommends ineffective treatments, or AI financial content describes non-existent strategies, the errors are replicated millions of times across republished and scraped versions of the original.

How Are Readers Responding to AI Content Saturation?

Readers are developing new trust heuristics: informal signals for evaluating content quality in the absence of verifiable authorship. The signals gaining importance include author credentials with verifiable professional histories, publication track records, specificity of claims (vague generalities are increasingly associated with AI generation), and original reporting (quotes, exclusive data, first-person observation) that algorithms cannot authentically produce.

What Should Publishers and Brands Do About AI Content Trust?

Publications navigating this well are doing three things: practicing radical transparency (disclosing when AI tools assist in research or drafting), prioritizing original reporting that AI cannot replicate, and investing in author identity through profiles, credentials, and human voices readers can verify independently. Brands using undisclosed AI content increasingly trigger reader skepticism, especially among audiences aged 25–45. Transparency, counterintuitively, builds more trust than concealment.

How Does This Change the Future of Online Content?

The paradox of AI content saturation is that it makes genuinely human-authored content more valuable. When human perspective, original research, and lived experience become rare in a sea of algorithmic output, they command attention and trust that cannot be bought through volume. Writers, journalists, and creators who invest in demonstrable expertise and honest voice are not competing with AI content — they are offering something it cannot produce: authentic human judgment.

FAQs

Q: How common is AI-generated content online in 2026?

A: A 2025 NewsGuard study put AI-generated content at roughly 20% of indexed web articles, with significantly higher shares in product reviews, local news aggregation, and financial summaries.

Q: Can readers tell when content is AI-generated?

A: Research suggests readers identify AI content only slightly better than chance — and often rate professionally formatted AI content as more trustworthy than equivalent human writing.

Q: What signals indicate high-quality, human-written content?

A: Verifiable author credentials, original reporting, specific rather than vague claims, and transparent editorial standards are the strongest indicators.

Q: Should brands disclose when they use AI for content?

A: Yes. Transparency about AI assistance builds more long-term trust than concealment, particularly among audiences aged 25–45, who are increasingly skeptical of undisclosed AI content.